Photonic Systems Integration Laboratory

SCENICC - Soldier Centric Imaging Via Computational Cameras

U.S. forces are often immersed in a highly complex, rapidly evolving, hostile environment containing a diverse collection of potential threats. Despite significant recent advances in both the platforms (e.g., unmanned aerial vehicles) and the sensor payloads (e.g., very high resolution cameras) employed within the wide array of modern Intelligence, Surveillance, and Reconnaissance (ISR) capabilities, these conventional solutions do not currently provide the spatial, temporal or functional capabilities required by the individual warfighter.

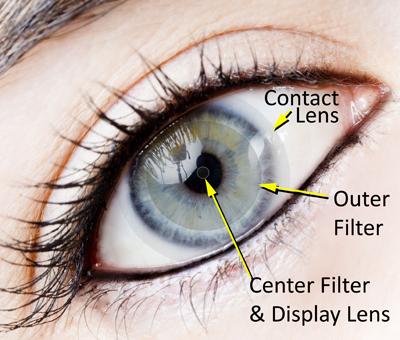

The vision of the Soldier Centric Imaging via Computational Cameras (SCENICC) program is to develop novel computational imaging capabilities and explore joint design of hardware and software to give warfighters access to systems that greatly enhance their awareness, security and survivability. The SCENICC program envisions a final system comprising imaging and non-imaging optical sensors deployed both locally (e.g., soldier-mounted) and in a distributed fashion (e.g., exploiting collections of soldiers and/or unmanned vehicles).

Research began in 2011 in fields such as computational imaging theory, data collection/conditioning, data extraction/processing and human interface. Capability demonstrations are planned in the areas of Hands-Free Zoom, Computer Enhanced Vision and Full Sphere Awareness.

Project Members:

Joe Ford, Igor Stamenov, Stephen J. Olivas, Glenn Schuster, Nojan Motamedi, Ilya Agurok (UCSD PSILab)

Ron Stack, Adam Johnson, and Rick Morrison (Distant Focus Corporation)